Toxicity in video games is brushed off by many as a foregone conclusion. “That’s just how video games are,” you’ll hear people say. Whether that’s an excuse to act like a jerk under the cover of online anonymity or simply resignation in the face of what seems like an unwinnable battle, the idea that toxicity is an inescapable part of video games is something Blizzard is unwilling to accept.

The studio has been putting a number of measures in place to identify and punish toxic behavior in Overwatch. Until now, that process has relied on player reports followed by manual review by an actual person. Blizzard wants to make that process more automatic by using machine learning and algorithms that would detect toxic language and behavior without players having to report incidents themselves.

“We’ve been experimenting with machine learning. We’ve been trying to teach our games what toxic language is, which is kinda fun. The thinking there is you don’t have to wait for a report to determine that something’s toxic. Our goal is to get it so you don’t have to wait for a report to happen,” Kaplan told Kotaku in an interview.

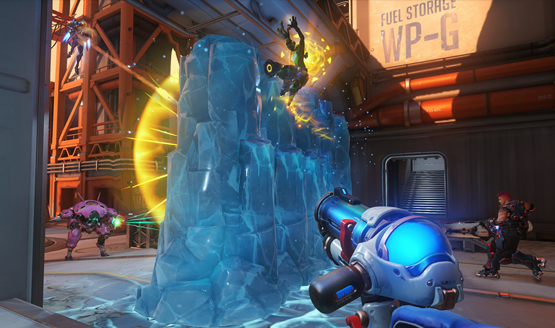

This is the next step in a process that is already bearing fruit. In January, Kaplan announced that with the new reporting and punishment systems in place, abusive chat had dropped by 17% and players were using those reporting systems 20% more. While Blizzard is currently teaching Overwatch which toxic language to look out for, the studio hopes it can also teach the game to recognize toxic in-game behavior. “That’s the next step,” said Kaplan. “Like, do you know when the Mei ice wall went up in the spawn room that somebody was being a jerk?”

The biggest worry about using algorithms and machine learning to combat Overwatch toxicity is context. Is the language from a stranger or a friend? Was that Mei ice wall an attempted strategic move, or was the player genuinely trying to be a jerk and spoil the fun of other players? Kaplan addressed this by saying that the AI is currently being taught to go after the most obvious and abhorrent examples of toxic behavior. It can also be set to initially flag the behavior for review, with punishment handed down only after repeat occurrences.
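For readers curious what a "flag first, punish on repeats" policy might look like in practice, here is a minimal sketch. It is not Blizzard's system: the class name, the score scale, and both threshold values are hypothetical, and it assumes some upstream classifier has already assigned each incident a toxicity score between 0 and 1.

```python
from collections import defaultdict

# Hypothetical values for illustration only; nothing here comes from Blizzard.
FLAG_THRESHOLD = 0.9          # act only on the most clear-cut incidents
STRIKES_BEFORE_PENALTY = 3    # earlier flags go to human review instead


class ModerationQueue:
    """Tracks how many high-confidence incidents each player has accumulated."""

    def __init__(self):
        self.flags = defaultdict(int)

    def handle(self, player_id: str, toxicity_score: float) -> str:
        """Return 'ignore', 'flag_for_review', or 'penalize' for one incident."""
        if toxicity_score < FLAG_THRESHOLD:
            # Ambiguous cases (banter between friends, a possibly strategic
            # ice wall) are left alone rather than guessed at.
            return "ignore"
        self.flags[player_id] += 1
        if self.flags[player_id] >= STRIKES_BEFORE_PENALTY:
            return "penalize"
        return "flag_for_review"
```

The high threshold mirrors Kaplan's point about targeting only the most obvious cases, while the strike counter keeps a single incident from triggering an automatic punishment.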

In addition to reporting and punishing toxicity, Kaplan wants to turn that around and reinforce positive behavior in people as well. “We can start looking toward the future and talking about things like, what’s the positive version of reporting?” said Kaplan. “Reporting is saying ‘Hey, Adrian was really bad and I want to punish him for that,’ but what’s the version where I can say ‘That Adrian guy was an awesome teammate and I’m so glad I had him’? We’re punishing the bad people, so how do we get people to start thinking about being better citizens within our ecosystem?”

As Blizzard succeeds in both punishing bad behavior and rewarding positivity, we’re sure to see games reach a turning point where toxicity isn’t seen as a foregone conclusion that can’t be fought. Developers retain control of their own online environments, and each handles player behavior differently. Blizzard’s fight against Overwatch toxicity might be on the front lines, but there’s an entire industry full of games and players that could learn lessons from Blizzard’s experimentation.

[Source: Kotaku]